The password G7$kL9#mQ2&xP4!w looks unbreakable. Every password checker rates it "excellent." Researchers found that Claude produced this exact string in 18 of 50 attempts. Here's why AI-generated passwords are a security disaster in disguise.

A new study just confirmed what I've suspected for a long time: asking an AI chatbot to generate your password is one of the worst security decisions you can make. Not because the passwords look weak. They look great. Password checkers rate them "excellent." They have uppercase letters, numbers, symbols, and the right length. The problem runs deeper than aesthetics.

I want to walk you through exactly what researchers found, what it means for your security, and why this matters even if you've never once asked ChatGPT for a password.

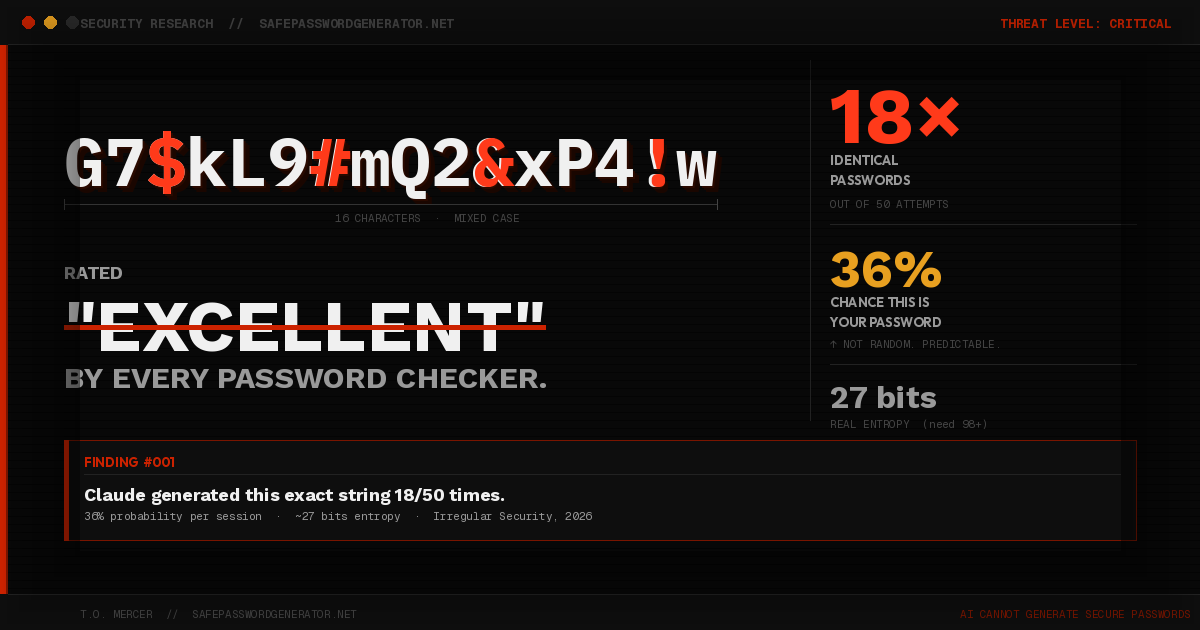

Meet G7$kL9#mQ2&xP4!w: The Password Claude Generated 18 Times

Frontier AI security firm Irregular ran a simple but damning test. They asked Claude, ChatGPT, and Gemini to each generate 50 passwords. Then they analyzed what came back.

With Claude Opus, one password appeared 18 times out of 50 attempts: G7$kL9#mQ2&xP4!w. That is 36% of the sample, so an attacker guessing that one string would have matched more than a third of the outputs. Paste it into any password strength checker right now and you'll get a perfect score. Zero warnings. No red flags.

The full findings:

- 50 passwords generated by Claude Opus. Only 30 were unique. 20 were duplicates.

- One string accounted for 18 of the 50 outputs: G7$kL9#mQ2&xP4!w.

- Nearly every password started with an uppercase letter, almost always "G." Second character: almost always "7."

- Characters like "L," "9," "m," "2," "$," and "#" appeared across virtually every output. Most of the alphabet never showed up at all.

- A randomly generated 16-character password should carry roughly 98 bits of entropy. These averaged around 27 bits.
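To make the entropy figures concrete, here is a small arithmetic sketch. The 70-symbol alphabet is an assumption on my part (it is one alphabet size that reproduces the roughly 98-bit figure; the numbers above do not state which alphabet was used), and the function names are illustrative. The second function computes min-entropy, which describes how hard the single most likely output is to guess.

```python
import math

def uniform_entropy_bits(length: int, alphabet_size: int) -> float:
    """Entropy in bits when each character is drawn independently
    and uniformly from an alphabet of the given size."""
    return length * math.log2(alphabet_size)

def min_entropy_bits(top_probability: float) -> float:
    """Min-entropy in bits: the uncertainty an attacker faces when
    guessing the single most likely output first."""
    return -math.log2(top_probability)

# Assumption: a 70-symbol alphabet, chosen because it yields about 98 bits.
print(round(uniform_entropy_bits(16, 70), 1))  # 98.1

# A string that shows up in 18 of 50 samples has an observed frequency of 0.36.
print(round(min_entropy_bits(18 / 50), 2))     # 1.47
```

Read this way, the most frequent output in that sample offers an attacker's first guess less than two bits of resistance, regardless of how the string looks.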

G7$kL9#mQ2&xP4!w scores "excellent" on every standard checker. In practice, an attacker who knows how Claude generates passwords could target it directly. The strength rating is meaningless.

Why This Happens: It's Not a Bug

This is not a bug. It's not a fluke. It's fundamental to how large language models work.

Language models are trained to predict the most likely next token given everything before it. They are optimized for plausibility, coherence, and pattern matching. These are great traits for writing emails or answering questions. They are exactly the wrong traits for generating passwords.

A truly secure password requires a cryptographically secure pseudorandom number generator (CSPRNG): specialized software designed to produce outputs that are statistically uniform and unpredictable. No token probabilities. No training bias. No learned patterns. Pure, unbiased randomness.
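For contrast, here is a minimal sketch of CSPRNG-backed generation using Python's standard `secrets` module, which draws from the operating system's secure random source. The alphabet and the function name `generate_password` are illustrative assumptions, not anything prescribed by the research.

```python
import secrets
import string

# Assumption: an illustrative 70-symbol alphabet; adjust to your site's rules.
ALPHABET = string.ascii_letters + string.digits + "!@#$%^&*"

def generate_password(length: int = 16) -> str:
    """Draw each character independently and uniformly from ALPHABET
    using the OS-backed CSPRNG exposed by the `secrets` module."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

print(generate_password())
```

Every character position is an independent uniform draw, so there is no position where "G" or "7" is favored.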

An AI model cannot replicate this. When you ask Claude for a "random secure password," you get something that follows deeply predictable rules. The output, G7$kL9#mQ2&xP4!w, is not random. It is a statistical artifact of how Claude was trained.

The weakness is invisible unless you know to test for it. Standard password checkers evaluate structure: length, character types, no dictionary words. They cannot evaluate the entropy source. They cannot tell whether the generator was a CSPRNG or a language model with a bias toward "G7."
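A toy version of such a checker shows the blind spot. This is a hypothetical structure-only scorer written for illustration, not the logic of any real product; it inspects length and character classes and has no way of knowing where the string came from.

```python
import string

def naive_strength_rating(password: str) -> str:
    """Hypothetical structure-only checker: scores length and character
    classes, with no knowledge of how the password was produced."""
    checks = [
        len(password) >= 16,
        any(c in string.ascii_uppercase for c in password),
        any(c in string.ascii_lowercase for c in password),
        any(c in string.digits for c in password),
        any(c in string.punctuation for c in password),
    ]
    score = sum(checks)
    return {5: "excellent", 4: "good"}.get(score, "weak")

print(naive_strength_rating("G7$kL9#mQ2&xP4!w"))  # excellent
```

The rating depends only on the string itself, so a password that a model repeats across sessions scores the same as one drawn from a CSPRNG.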

The Bigger Risk: It's Hiding Inside Code

Most people assume this only affects users who type "generate a secure password" into a chatbot. That group is real, but it is a fraction of the exposure.

The larger risk is in code. AI coding assistants write millions of lines every day. When those agents need to generate a password, encryption key, or session token, they sometimes produce it using direct LLM output instead of calling a proper CSPRNG. The resulting code looks clean. It passes code review. It gets deployed to production, quietly insecure, rated "excellent" by every automated scanner.

Irregular notes this is especially concerning given that Anthropic's CEO predicted AI may soon write essentially all code. If that code contains credentials like G7$kL9#mQ2&xP4!w baked in by a coding agent, the attack surface is enormous and nearly invisible.
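As a rough sketch of what the safer pattern looks like in application code, secrets can be created at runtime from the platform CSPRNG instead of being written into the source as literals. The function names and the commented-out `DB_PASSWORD` line are hypothetical examples.

```python
import secrets

# Anti-pattern (illustrative): a literal credential pasted from model output.
# DB_PASSWORD = "G7$kL9#mQ2&xP4!w"

def new_session_token() -> str:
    """URL-safe text token built from 32 bytes of CSPRNG output."""
    return secrets.token_urlsafe(32)

def new_encryption_key() -> bytes:
    """32 random bytes, suitable in size for a 256-bit symmetric key."""
    return secrets.token_bytes(32)

print(new_session_token())
print(new_encryption_key().hex())
```

In a real deployment the generated values would normally live in a secrets manager or environment configuration, which is outside the scope of this sketch.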

What Secure Password Generation Actually Looks Like

The fix is not complicated, but it requires the right tool. Legitimate password generators draw on OS-level or hardware-backed randomness through properly seeded CSPRNGs. No training data, no learned patterns, no token probabilities. The output carries full entropy. No two users will consistently get the same result. There is no "G7" bias baked in.
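The same 50-sample duplicate count can be run against a CSPRNG-backed generator as a sanity check. This is a sketch under assumptions: the alphabet and helper name are illustrative, and the sample size of 50 simply mirrors the test described earlier.

```python
import secrets
import string
from collections import Counter

# Assumption: an illustrative 70-symbol alphabet.
ALPHABET = string.ascii_letters + string.digits + "!@#$%^&*"

def sample_passwords(count: int = 50, length: int = 16) -> list[str]:
    """Generate `count` independent passwords from the OS-backed CSPRNG."""
    return [
        "".join(secrets.choice(ALPHABET) for _ in range(length))
        for _ in range(count)
    ]

samples = sample_passwords()
most_common_password, occurrences = Counter(samples).most_common(1)[0]
print(f"unique: {len(set(samples))} of {len(samples)}")
print(f"most frequent password appeared {occurrences} time(s)")
```

With about 98 bits per password, a repeat within 50 draws is astronomically unlikely, so any duplicate here would point to a broken generator.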

This is exactly why dedicated password managers exist. It is not about features or UI. It is about the underlying randomness architecture.

Irregular's recommendation: users should avoid LLM-generated passwords entirely. Developers should route password generation through secure libraries. AI labs should disable direct credential generation by default.

What You Should Do Right Now

- Stop using AI chatbots to generate passwords, even if the output looks strong. G7$kL9#mQ2&xP4!w looked strong too.

- Use a dedicated password manager with a built-in generator. Bitwarden, NordPass, and 1Password all use proper CSPRNGs.

- If you write or review code, audit any AI-generated credential logic and replace it with language-appropriate secure random functions.

- If you manage a team, add LLM-generated credentials to your code review checklist.
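For the code-audit step, a first pass can be as simple as flagging string literals assigned to credential-like names. The sketch below is a hypothetical heuristic, not a real scanner: the regular expression and the name `find_hardcoded_credentials` are my own, it will miss many cases, and it cannot tell whether a literal came from an LLM. It only surfaces candidates for a human to review.

```python
import re

# Hypothetical heuristic: a credential-like name assigned a quoted literal.
CREDENTIAL_ASSIGNMENT = re.compile(
    r"""(?ix)
    \b(\w*(?:password|passwd|secret|token|api_key)\w*)  # variable name
    \s*=\s*
    (["'])(.{8,}?)\2                                     # quoted literal, 8+ chars
    """
)

def find_hardcoded_credentials(source: str) -> list[tuple[int, str]]:
    """Return (line number, variable name) for each suspicious assignment."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        match = CREDENTIAL_ASSIGNMENT.search(line)
        if match:
            findings.append((line_number, match.group(1)))
    return findings

example = 'DB_PASSWORD = "G7$kL9#mQ2&xP4!w"\ntoken = secrets.token_urlsafe(32)\n'
print(find_hardcoded_credentials(example))  # [(1, 'DB_PASSWORD')]
```

The second line of the example is not flagged because its value comes from a function call, not a literal.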

Use our free client-side generator. Nothing is sent to any server; all randomness happens in your browser with the Web Crypto API.

The full research is available at irregular.com/publications/vibe-password-generation. Worth reading if you want the technical depth.

Frequently Asked Questions

Can AI generate secure passwords?

No. AI chatbots like Claude, ChatGPT, and Gemini cannot generate cryptographically secure passwords. Research by Irregular found that Claude produced the same password (G7$kL9#mQ2&xP4!w) 18 times out of 50 attempts, or 36% of the sample. LLMs are trained to predict plausible patterns, the opposite of true randomness. Use a dedicated password manager instead.

What is a vibe password?

A vibe password is a term coined by Irregular (Frontier AI Security) for passwords generated directly by large language models. They look complex and rate as "excellent" on standard checkers but are fundamentally weak because LLMs produce statistically predictable outputs rather than cryptographically random ones.

Is G7$kL9#mQ2&xP4!w a secure password?

No. Despite appearing strong and scoring "excellent" on password checkers, G7$kL9#mQ2&xP4!w is not secure. It was generated by Claude 18 times out of 50 attempts in a 2026 study by Irregular. Any attacker who knows AI password generation patterns could target it directly. The passwords in that study averaged approximately 27 bits of entropy, far below the roughly 98 bits a truly random 16-character password carries.

What should I use instead of AI to generate passwords?

Use a dedicated password manager with a built-in generator such as Bitwarden, NordPass, or 1Password. These tools use cryptographically secure pseudorandom number generators (CSPRNGs) that produce unpredictable, high-entropy passwords with no learned patterns.

T.O. Mercer covers password security, digital hygiene, and applied cryptography at SafePasswordGenerator.net.